Admittedly, I did this to myself while tracking down the root cause of how operations engineers were putting hosts back into production clusters without a properly functioning VXLAN. Apparently, the easiest way to get a host into this state is to repeatedly move it out of a production cluster into an isolation cluster, where the NSX VIB module is uninstalled, and back again. This is a bug that is resolved in vCenter 6 Update 3, so at least there’s that little nugget of good news.

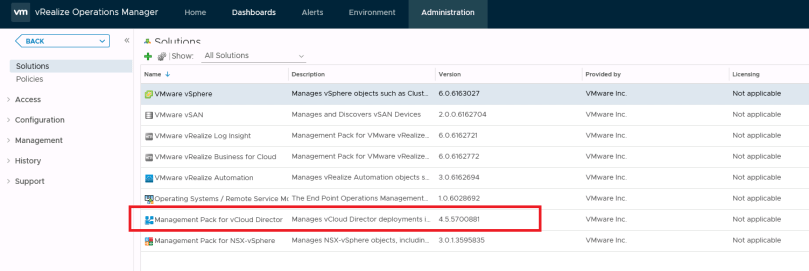

Current production setup:

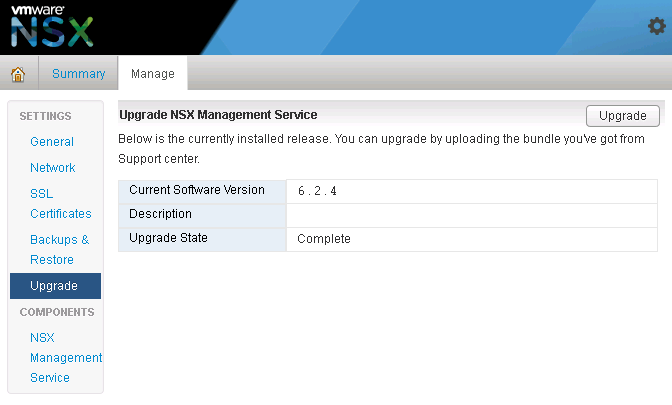

- NSX: 6.2.8

- ESXi: 6.0.0 build-4600944 (Update 2)

- VCSA: 6 Update 2

- VCD: 8.20

For this particular error, vCenter events showed “VIB Module For Agent Is Not Installed On Host”. After searching the KB articles, I came across KB2053782, “‘Agent VIB module not installed’ when installing EAM/VXLAN Agent using VUM”. Following the KB, I made sure Update Manager was in working order and worked through the rest of its steps, but the issue remained.

- Investigated EAM.log and found the following:

1-12T17:48:27.785Z | ERROR | host-7361-0 | VibJob.java | 761 | Unhandled response code: 99

2018-01-12T17:48:27.785Z | ERROR | host-7361-0 | VibJob.java | 767 | PatchManager operation failed with error code: 99

With VibUrl: https://172.20.4.1/bin/vdn/vibs-6.2.8/6.0-5747501/vxlan.zip

2018-01-12T17:48:27.785Z | INFO | host-7361-0 | IssueHandler.java | 121 | Updating issues:

eam.issue.VibNotInstalled {

time = 2018-01-12 17:48:27,785,

description = 'XXX uninitialized',

key = 175,

agency = 'Agency:7c3aa096-ded7-4694-9979-053b21297a0f:669df433-b993-4766-8102-b1d993192273',

solutionId = 'com.vmware.vShieldManager',

agencyName = '_VCNS_159_anqa-1-zone001_VMware Network Fabri',

solutionName = 'com.vmware.vShieldManager',

agent = 'Agent:f509aa08-22ee-4b60-b3b7-f01c80f555df:669df433-b993-4766-8102-b1d993192273',

agentName = 'VMware Network Fabric (89)',
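In a busy EAM log, the failures above are easy to miss. The sketch below filters a log for the three failure signatures that appear in this post (`VibNotInstalled`, `VibInstallationFailed`, and `PatchManager operation failed`). The function name `vib_failures` is hypothetical, and because the log location can differ between vCenter builds, the path is passed in rather than hard-coded.

```shell
#!/bin/sh
# vib_failures: hypothetical helper that prints log lines related to
# VIB installation failures, prefixed with their line numbers.
# Usage: vib_failures /path/to/eam.log
vib_failures() {
    if [ ! -r "$1" ]; then
        echo "cannot read log file: $1" >&2
        return 1
    fi
    # Match the failure signatures seen in this post's EAM log excerpts.
    grep -n -E 'VibNotInstalled|VibInstallationFailed|PatchManager operation failed' "$1"
}
```

On the appliance, something like `vib_failures /var/log/vmware/eam/eam.log` should work, but that path is an assumption for VCSA 6.x, so confirm where eam.log lives on your own appliance first.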

- Investigated the esxupdate.log file and found the following:

bba9c75116d1:669df433-b993-4766-8102-b1d993192273')), com.vmware.eam.EamException: VibInstallationFailed

2018-01-12T17:48:25.416Z | ERROR | agent-3 | AuditedJob.java | 75 | JOB FAILED: [#212229717] EnableDisableAgentJob(AgentImpl(ID:'Agent:c446cd84-f54c-4103-a9e6-fde86056a876:669df433-b993-4766-8102-b1d993192273')), com.vmware.eam.EamException: VibInstallationFailed

2018-01-12T17:48:27.821Z | ERROR | agent-2 | AuditedJob.java | 75 | JOB FAILED: [#1294923784] EnableDisableAgentJob(AgentImpl(ID:'Agent:f509aa08-22ee-4b60-

- Restarting the VUM services didn’t help; the VIB installation still failed.

- Restarting the host didn’t help either.

- On the ESXi host, I ran `esxcli software vib list` to check which VIBs were installed, but it returned no information:

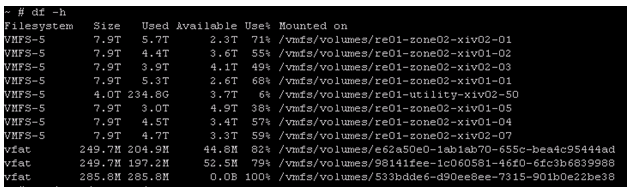
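Rather than eyeballing the full `esxcli software vib list` output, a small filter can report which NSX VIBs are missing. This is a sketch that assumes the VIB name is the first column of each row (as in the standard list output) and that the three VIBs to look for are esx-vxlan, esx-vsip and esx-dvfilter-switch-security; the function name `missing_nsx_vibs` is hypothetical.

```shell
#!/bin/sh
# missing_nsx_vibs: hypothetical helper. Reads `esxcli software vib list`
# output on stdin and prints each expected NSX VIB that is not listed.
# Returns 0 when all three are present, 1 otherwise.
NSX_VIBS="esx-vxlan esx-vsip esx-dvfilter-switch-security"

missing_nsx_vibs() {
    # Keep only the first column (the VIB name) of every row.
    installed=$(awk '{ print $1 }')
    status=0
    for vib in $NSX_VIBS; do
        # -x: whole-line match, so a VIB name never matches a longer one.
        if ! printf '%s\n' "$installed" | grep -qx -- "$vib"; then
            echo "$vib"
            status=1
        fi
    done
    return "$status"
}

# Example on the host:
#   esxcli software vib list | missing_nsx_vibs
```

On a host in the state described here, where the list comes back empty, all three names are printed.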

At this point I started to suspect that the ESXi host had corrupted files. Digging around a little more, I found KB2122392, “Troubleshooting vSphere ESX Agent Manager (EAM) with NSX”, and KB2075500, “Installing VIB fails with the error: Unknown command or namespace software vib install”. These are the three NSX VIBs a prepared host should have:

- esx-vxlan

- esx-vsip

- esx-dvfilter-switch-security

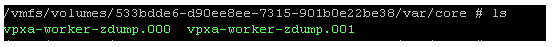

I tried installing them manually but got errors indicating corrupted files on the ESXi host. I first had to run the following commands to restore the host’s image database (imgdb.tgz) from the alternate bootbank. **CAUTION – THE HOST MUST BE REBOOTED AFTER THESE TWO COMMANDS**:

- `# mv /bootbank/imgdb.tgz /bootbank/imgdb.gz.bkp`

- `# cp /altbootbank/imgdb.tgz /bootbank/imgdb.tgz`

- `# reboot`
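The same recovery can be wrapped in a function that first checks that the alternate bootbank actually has an imgdb.tgz before touching anything. This is a sketch, not a VMware-documented procedure: the function name `restore_imgdb` is hypothetical, it assumes the alternate bootbank still holds a good copy of the image database, and it deliberately leaves the reboot to you.

```shell
#!/bin/sh
# restore_imgdb: hypothetical, more defensive version of the manual steps.
# Copies imgdb.tgz from the alternate bootbank over the one in the primary
# bootbank, keeping a backup. The bootbank paths are parameters (defaulting
# to the ESXi mount points) only so the function can be tried elsewhere.
restore_imgdb() {
    boot=${1:-/bootbank}
    alt=${2:-/altbootbank}
    if [ ! -f "$alt/imgdb.tgz" ]; then
        echo "no imgdb.tgz in $alt; not touching $boot" >&2
        return 1
    fi
    # Back up the current copy under the same name used in the manual steps.
    if [ -f "$boot/imgdb.tgz" ]; then
        mv "$boot/imgdb.tgz" "$boot/imgdb.gz.bkp" || return 1
    fi
    cp "$alt/imgdb.tgz" "$boot/imgdb.tgz" || return 1
    echo "imgdb.tgz restored from $alt; reboot the host before installing VIBs"
}
```

Running `restore_imgdb` with no arguments acts on /bootbank and /altbootbank; the caution about rebooting still applies.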

Once the host came back up, I continued with the manual VIB installation, and all three NSX VIBs installed successfully. The host now shows a healthy status in NSX host preparation, and Guest Introspection (GI) installed successfully.
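The manual installation step can be sketched as a thin wrapper around `esxcli software vib install`. It assumes the vxlan.zip offline bundle (the file EAM was trying to fetch from the VibUrl in the log above) has already been copied to the host, for example to /tmp, and that the bundle contains all three NSX VIBs. The wrapper name and the `ESXCLI` override are hypothetical; `-d`/`--depot`, `-n`/`--vibname` and `--dry-run` are standard options of the esxcli command.

```shell
#!/bin/sh
# install_nsx_vibs: hypothetical wrapper around `esxcli software vib install`.
# $1: path to the NSX offline bundle (vxlan.zip) already on the host.
# Further arguments (for example --dry-run) are passed through to esxcli.
# ESXCLI can be overridden, which is only useful for testing the wrapper.
install_nsx_vibs() {
    depot=$1
    if [ -z "$depot" ] || [ ! -f "$depot" ]; then
        echo "usage: install_nsx_vibs /path/to/vxlan.zip [esxcli options]" >&2
        return 2
    fi
    shift
    # Name each VIB explicitly so only the NSX VIBs are taken from the bundle.
    "${ESXCLI:-esxcli}" software vib install -d "$depot" \
        -n esx-vxlan -n esx-vsip -n esx-dvfilter-switch-security "$@"
}
```

A cautious sequence is `install_nsx_vibs /tmp/vxlan.zip --dry-run` to see what esxcli would change, then the same call without `--dry-run`.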
