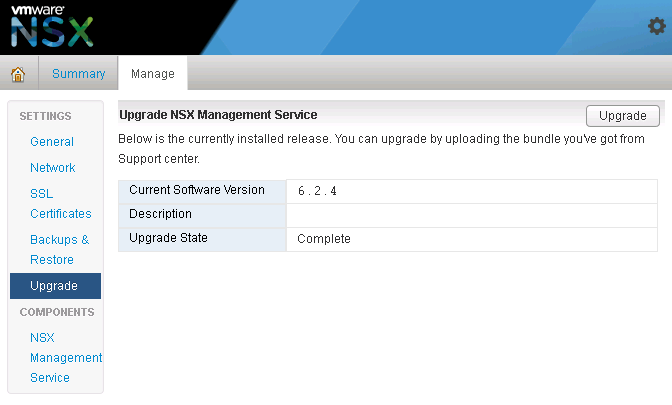

I realize that writing up this blog post now may be irrelevant, considering most if not all VMware customers are well beyond NSX 6.2.4. Still, some folks may find the information shared here useful. At the very least, the instructions for restarting the bluelane-manager service on the NSX appliance are worth keeping in your Rolodex of commands.

There’s an interesting bug in NSX appliance versions 6.2.4 through 6.2.8, where CPU utilization slowly climbs, eventually maxing out at 100% after a few hours. In my environment, we had vSphere 6 and roughly 60 hosts, also on ESXi 6. We were also using traditional SAN storage over FCoE, in this case a combination of IBM XIV and INFINIDAT arrays. In most cases, we could just restart the NSX appliance, which would resolve the CPU utilization issue; however, sometimes the CPU utilization would climb back up to 100% within two hours. When the appliance CPU maxed out, the NSX Manager user interface would typically crash after a few seconds.

The Cause (copied from KB2145934):

“This issue occurs when the PurgeTask process is not purging the proper amount of job tasks in the NSX database causing job entries to accumulate. When the number of job entries increase, the PurgeTask process attempts to purge these job entries resulting in higher CPU utilization which triggers (GC) Garbage Collection. The GC adds more CPU utilization.”

The only problem with the KB is that our environment was already on 6.2.4, so clearly the problem had not been resolved.

To buy ourselves some time without restarting the NSX appliance, we found that simply restarting a service on the appliance called ‘bluelane-manager’ had the same effect, but this was only a workaround.

You can take the following steps to restart the bluelane-manager service:

- SSH to the NSX Manager using the ‘admin’ account

- Type the following to enter enable (privileged) mode:

en

- Type the following to start engineering mode, which gives you a root shell:

st en

- When prompted for the password, type:

IAmOnThePhoneWithTechSupport

- To get the status of the bluelane-manager service, type:

/etc/rc.d/init.d/bluelane-manager status

- To restart the bluelane-manager service, type:

/etc/rc.d/init.d/bluelane-manager restart

After a few seconds, the NSX appliance user interface should return to normal, and you can log in and confirm that CPU utilization has dropped back to normal levels.
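If you end up doing this often, the status and restart steps above can be wrapped in a small shell function. This is a minimal sketch, assuming you are already at the root shell reached via `st en` and that it behaves like a standard POSIX shell. The function name `restart_bluelane` and its optional path argument are hypothetical, my own illustration rather than anything that ships with NSX.

```shell
#!/bin/sh
# Hypothetical helper (not part of NSX): run from the root shell you get
# after "st en" on the NSX Manager appliance.
restart_bluelane() {
    # Default to the init script path used in the steps above; the optional
    # first argument only exists so the function can be tried against a stand-in.
    svc="${1:-/etc/rc.d/init.d/bluelane-manager}"

    # Bail out early if the init script is missing or not executable.
    if [ ! -x "$svc" ]; then
        echo "init script not found or not executable: $svc" >&2
        return 1
    fi

    echo "== status before restart =="
    "$svc" status

    echo "== restarting =="
    # Propagate the init script's exit code if the restart itself fails.
    "$svc" restart || return $?

    echo "== status after restart =="
    "$svc" status
}
```

Paste the function into the engineering-mode shell and run `restart_bluelane` with no arguments. Then give the service a few seconds and confirm in the UI that CPU usage has come back down.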

What made the issue worse was that we had hosts going into the purple diagnostic screen. I’m not talking one or two here. Imagine having over 20 ESXi hosts drop at the same time, during production hours, with every one of them running customer workloads… If you’ll excuse the vulgarity, that certainly has a pucker factor exceeding 10. At the time, I was working for a service provider running vCloud Director, so customers were essentially sharing the ESXi host resources. We were also using VMware’s Guest Introspection (GI) service because we had Trend Micro deployed, and as a result most customers were sitting in the default security group.

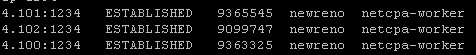

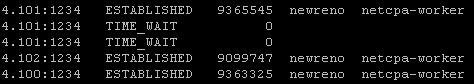

Through extensive troubleshooting with VMware developers, we determined the following at a high level. Because all customer VMs were in the default NSX security group, every time a customer VM was powered on or off, created or destroyed, vMotioned, or replicated into or out of the environment, that change had to be synced back to the NSX appliance, which then synced it to the ESXi hosts. Looking at specific logs on the ESXi hosts that only VMware had access to, we saw a backlog of sync instructions that the hosts would never have time to process, which was contributing to the NSX appliance CPU issue. This backlog was also causing the hosts to eventually purple screen. Fun fact: by restarting the hosts, we could buy ourselves close to two weeks before the issue would recur; however, performing many simultaneous vMotions would also drive the NSX appliance to 100% CPU, which would put us back into a bad state.

Thankfully, VMware was already working on a bug fix release at the time, NSX 6.2.8, and our issue helped spur the development team to finalize it, along with adding a few more bug fixes they had originally thought were resolved in the 6.2.4 release.

The fixes most relevant to the issues we faced were:

- Fixed Issue 1849037: NSX Manager API threads get exhausted when communication link with NSX Edge is broken

- Fixed Issue 1704940: You may encounter the purple diagnostic screen on the ESXi host if the pCPU count exceeds 256

- Fixed Issue 1760940: NSX Manager High CPU triggered by many simultaneous vMotion tasks

- Fixed Issue 1813363: Multiple IP addresses on same vNIC causes delays in firewall publish operation

- Fixed Issue 1798537: DFW controller process on ESXi (vsfwd) may run out of memory

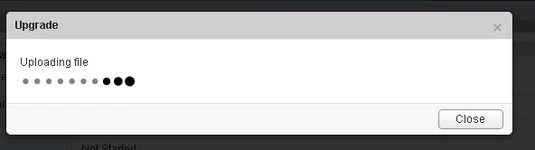

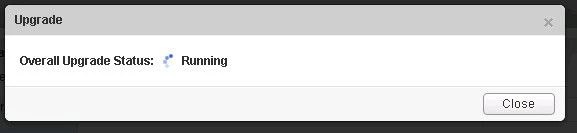

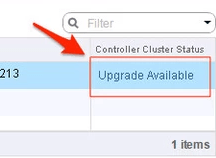

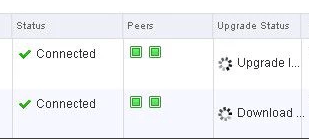

Upgrading to NSX 6.2.8 and rethinking our security groups brought stability back to our environment, although, as we later found out, not all of the above issues were completely resolved. In short, most of the “fixes” were really just process improvements under the hood. Specifically, we could still drive the NSX appliance to 100% CPU utilization by putting too many hosts into maintenance mode consecutively; at the very least, however, the CPU utilization was more likely to recover on its own, without us needing to restart the service or the appliance. Why is that important, you might ask? As a service provider, you want to roll through your hosts quickly and efficiently during upgrades, and an inefficiency like this in the NSX code base can drastically extend maintenance windows. Unfortunately for us at the time, VMware released the 6.2.8 maintenance patch after 6.3.x, so these fixes were not yet part of the 6.3.x release (see KB2150668).

As stated above, the instructions for restarting the bluelane-manager service on the NSX appliance are still very handy to have.
