Quiet often when I connect to customer sites to conduct a health check, I sometimes find their hosts having different NTP settings, or not having NTP configured at all. Probably one of the easiest one-liners I keep in my virtual Rolodex of powerCLI one-liners, is the ability to check NTP settings of all hosts in the environment.

Checking NTP with Powercli

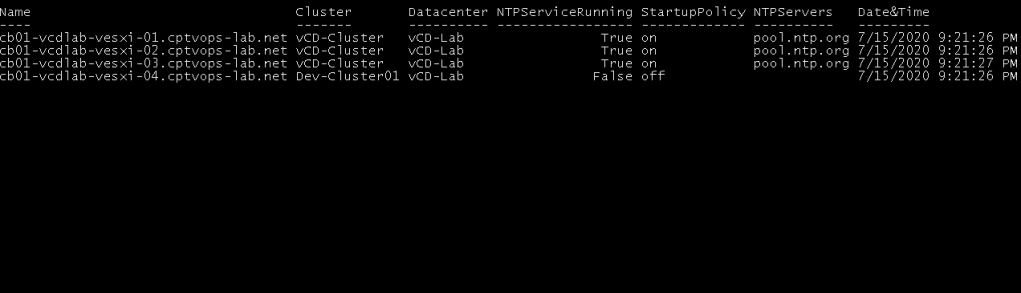

Get-VMHost | Sort-Object Name | Select-Object Name, @{N=”Cluster”;E={$_ | Get-Cluster}}, @{N=”Datacenter”;E={$_ | Get-Datacenter}}, @{N=“NTPServiceRunning“;E={($_ | Get-VmHostService | Where-Object {$_.key-eq “ntpd“}).Running}}, @{N=“StartupPolicy“;E={($_ | Get-VmHostService | Where-Object {$_.key-eq “ntpd“}).Policy}}, @{N=“NTPServers“;E={$_ | Get-VMHostNtpServer}}, @{N="Date&Time";E={(get-view $_.ExtensionData.configManager.DateTimeSystem).QueryDateTime()}} | format-table -autosize

With this rather lengthy command, I can get everything that is important to me.

We can see from the output that I have a single host in my Dev-Cluster that does not have NTP configured. Quiet often I find customers that have mis-configured NTP settings, do not make use of host profiles that can catch and address issues like this.

If you also wanted to see the incoming, outgoing and protocols settings, you could use the following:

Get-VMHost | Sort-Object Name | Select-Object Name, @{N=”Cluster”;E={$_ | Get-Cluster}}, @{N=”Datacenter”;E={$_ | Get-Datacenter}}, @{N=“NTPServiceRunning“;E={($_ | Get-VmHostService | Where-Object {$_.key-eq “ntpd“}).Running}}, @{N="IncomingPorts";E={($_ | Get-VMHostFirewallException | Where-Object {$_.Name -eq "NTP client"}).IncomingPorts}}, @{N="OutgoingPorts";E={($_ | Get-VMHostFirewallException | Where-Object {$_.Name -eq "NTP client"}).OutgoingPorts}}, @{N="Protocols";E={($_ | Get-VMHostFirewallException | Where-Object {$_.Name -eq "NTP client"}).Protocols}}, @{N=“StartupPolicy“;E={($_ | Get-VmHostService | Where-Object {$_.key-eq “ntpd“}).Policy}}, @{N=“NTPServers“;E={$_ | Get-VMHostNtpServer}}, @{N="Date&Time";E={(get-view $_.ExtensionData.configManager.DateTimeSystem).QueryDateTime()}} | format-table -autosize

Set NTP with Powercli

You can setup NTP on hosts with powercli as well. I have everything in my lab pointed to ‘pool.ntp.org’, so my example will use that.

code snippet

Get-VMHost | Add-VMHostNtpServer -NtpServer pool.ntp.org

Most likely you would have multiple corporate NTP servers you’d need to point to, and that is easily done by separating them with a comma. An example of having two: Instead of having just ‘pool.ntp.org’ I’d have ‘ntp-server01,ntp-server02’.

The next thing needed is the startup policy. VMware has three different options to choose from. on = Start and stop with host, automatic = start and stop with port usage, and off = start and stop manually. In my lab I have the policy set to on.

code snippet

Get-VMHost | Get-VmHostService | Where-Object {$_.key -eq "ntpd"} | Set-VMHostService -policy "on"

With that in mind, the following command I can make the NTP settings of all hosts consistent. This command assumes that I only have one NTP server. I am also stopping and starting the NTP service. It is also worth mentioning that each host that already has the ntp server ‘pool.ntp.org’, will throw a red error that the NtpServer already exists.

Get-VMHost | Get-VmHostService | Where-Object {$_.key -eq "ntpd"} | Stop-VMHostService -Confirm:$false; Get-VMHost | Get-VmHostService | Where-Object {$_.key -eq "ntpd"} | Set-VMHostService -policy "on"; Get-VMHost | Add-VMHostNtpServer -NtpServer pool.ntp.org; Get-VMHost | Get-VMHostFirewallException | Where-Object {$_.Name -eq "NTP client"} | Set-VMHostFirewallException -Enabled:$true; Get-VMHost | Get-VmHostService | Where-Object {$_.key -eq "ntpd"} | Start-VMHostService

Now NTP should be consistent across all hosts. Re-run the first command to validate.

You must be logged in to post a comment.