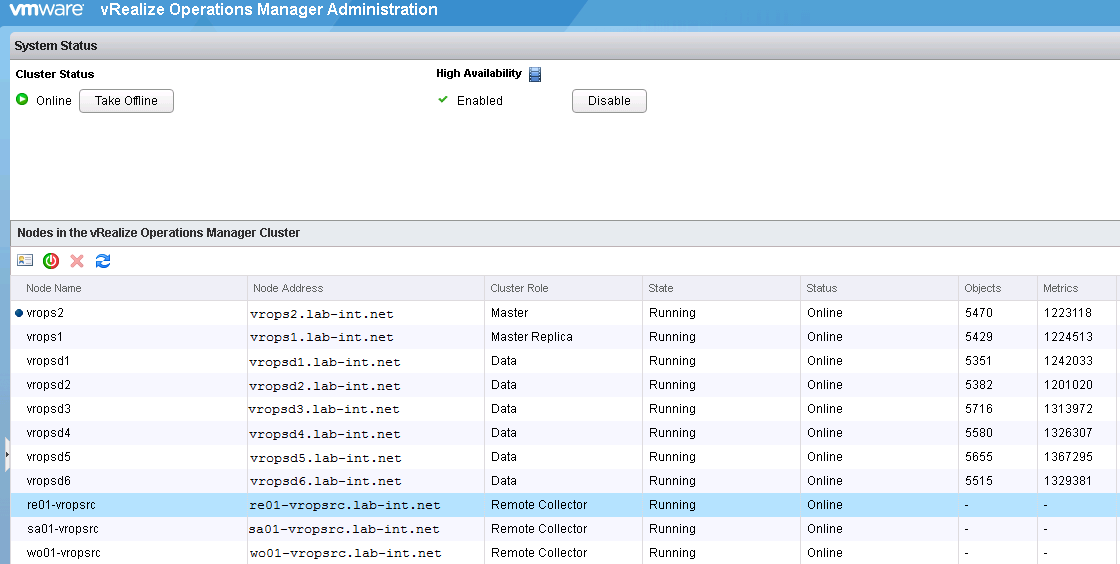

This post is not intended to be the traditional front-end health check on the appliance, and instead will focus on the back-end, specifically the Cassandra database on the data nodes. I decided to write this post due to the various issues I have encountered managing two large production deployments, with the largest containing 9 data nodes, and 3 remote collectors collecting and processing metrics north of 3,829,804.

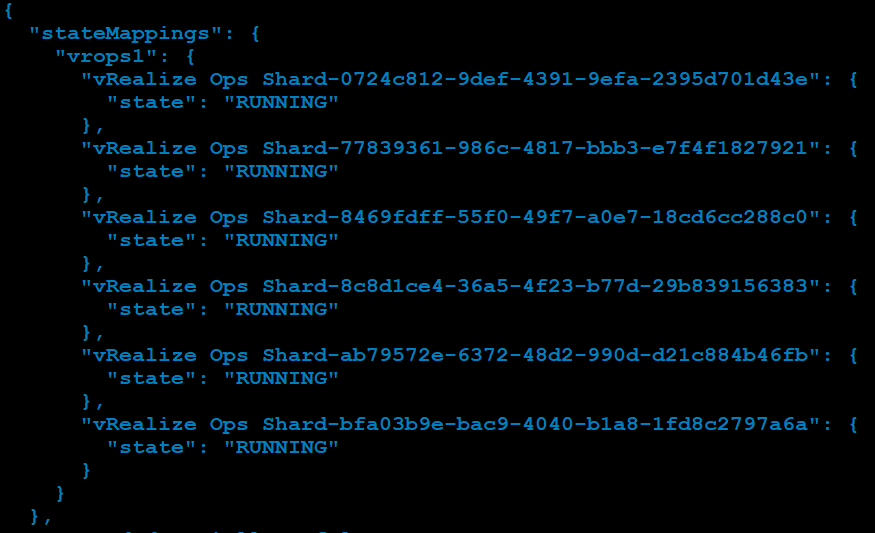

The first check we can do is on the database sync between the data nodes including the master and master replica. This can also be useful in determining unusual disk growth on one or more of the data nodes. Open a SSH session to the master appliance and issue the following command:

# $VMWARE_PYTHON_BIN /usr/lib/vmware-vcops/tools/vrops-platform-cli/vrops-platform-cli.py getShardStateMappingInfo

The sample output to be concerned with looks similar to the following example:

{

"stateMappings": {

"vrops1": {

"vRealize Ops Shard-0724c812-9def-4391-9efa-2395d701d43e": {

"state": "SYNCHING"

},

"vRealize Ops Shard-77839361-986c-4817-bbb3-e7f4f1827921": {

"state": "SYNCHING"

},

"vRealize Ops Shard-8469fdff-55f0-49f7-a0e7-18cd6cc288c0": {

"state": "RUNNING"

},

"vRealize Ops Shard-8c8d1ce4-36a5-4f23-b77d-29b839156383": {

"state": "RUNNING"

},

"vRealize Ops Shard-ab79572e-6372-48d2-990d-d21c884b46fb": {

"state": "RUNNING"

},

"vRealize Ops Shard-bfa03b9e-bac9-4040-b1a8-1fd8c2797a6a": {

"state": "OUT_OF_SYNC"

}

}

},

The “vRealize Ops Shard” refers to the data nodes including the master and master replica nodes. The available status’ are RUNNING, SYNCHING, BALANCING, OUT_OF_BALANCE, and OUT_OF_SYNC.

- States of RUNNING, SYNCHING and BALANCING are normal healthy states.

- OUT_OF_BALANCE and OUT_OF_SYNC status is cause for concern, and is enough to open an SR with VMware to have them take a look. But lets look a little deeper to see if there’s more going on here. It may be beneficial information to give to VMware’s GSS.

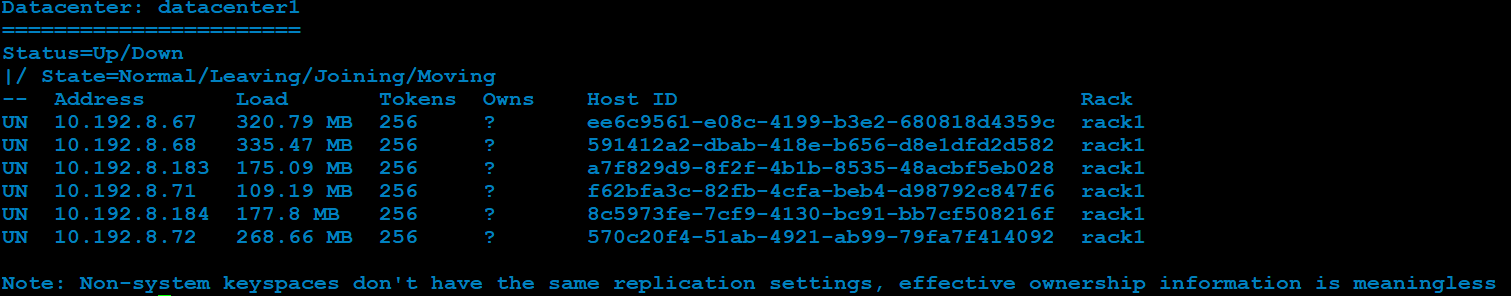

The vRealize Operations Manager appliance uses Apache Cassandra database, so with this next command, we will be looking at the database load using a Cassandra utility called node tool. This command is only gathering operational statistics from the database, so it is safe to run as we are not making any system changes here.

- A good time to use this utility is when you start getting alerts from various datanodes stating high load, or that cassandra DB service has crashed and has now recovered, or that the data node has disconnected and reconnected.

- I’ve also noticed this to be a cause for failed upgrades, or failed vrops cluster expansions.

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool --port 9008 status

This will return output similar to:

Datacenter: datacenter1

=======================

Status=Up/Down

|/ State=Normal/Leaving/Joining/Moving

-- Address Load Tokens Owns Host ID Rack

UN 192.2.3.6 80.08 GB 256 ? 232f646b-3fbc-4388-8962-34000c1bb48b rack1

UN 192.2.3.7 80.53 GB 256 ? 1bfec59b-3ba8-4ca0-942f-5bb2f97b7319 rack1

UN 192.2.3.4 80.11 GB 256 ? da6c672c-cc69-4284-a8f5-2775441bb113 rack1

UN 192.2.3.5 79.33 GB 256 ? ee4a3c3f-3f0f-46ac-b331-a816e8eb37c5 rack1

DN 192.2.3.3 75.13 GB 256 ? 19e80237-6f2c-4ff6-881e-ce94870e0ca5 rack1

Note: Non-system keyspaces don't have the same replication settings,

effective ownership information is meaningless

———————————————————————–

- The output to be concerned with here, is the load column. Under ideal operational conditions, I have been told this should be under 5GB of load per node. This command does not return data on the remote collectors because they do not contain a database.

- If database load is over 5GB, you will need to open an SR VMware GSS with this information, along with sending them the usual appliance log bundle. In this example, my data nodes had over 70 GB of load.

- If nodetool returns with an error: nodetool: Failed to connect to ‘127.0.0.1:9008’ – ConnectException: ‘Connection refused’, checkout this KB2144358 article. You may be able to get that node back online before calling GSS.

Concerning the database load, In most cases from my experience GSS would need to truncate the activity, results, and queueid tables, and then run a parallel nodetool repair command on all data nodes starting with the master in order to get the appliance’s feet back under it. I will detail those steps here as these are the steps usually performed:

- Take a snapshot of the nodes: master, master replica, data nodes (Remote Collectors can be skipped) to ensure no issues arise.

- Leave the cluster ONLINE

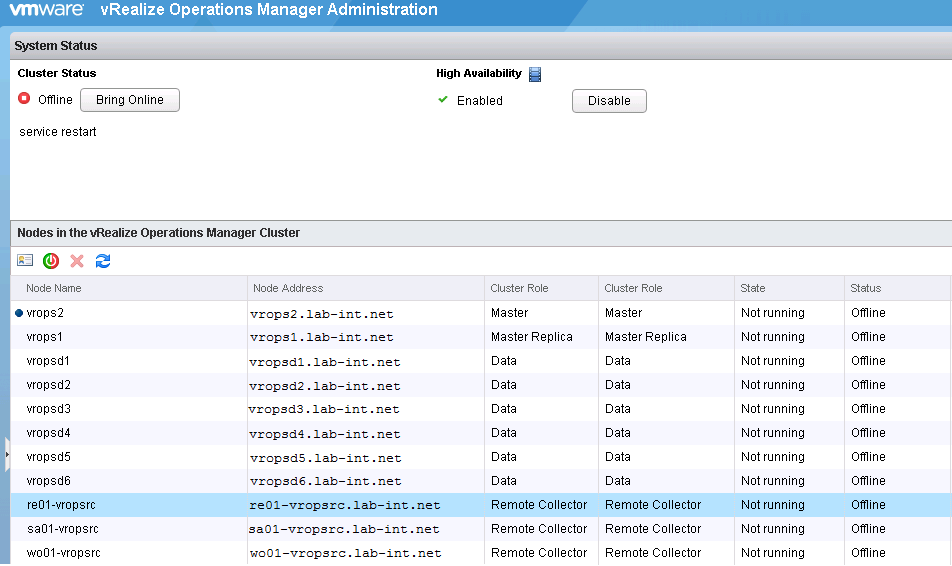

- Take analytics offline on the master and all data nodes:

- Perform this step in parallel. That is, execute this command on each node one-after-another without waiting for it to complete in a single terminal firstA simple for-loop calling ssh to the nodes to do this isn’t sufficient. It is best to ensure the master and data nodes are all going offline together.

# service vmware-vcops stop analytics

4. Repair the RESORUCE_STATE_DELETE flags for non-existing resources that are to be deleted:

- On the master node only execute:

# su - postgres -c "/opt/vmware/vpostgres/current/bin/psql -d vcopsdb -p 5433 -c \"update resource set resource_flag='RESOURCE_STATE_NORMAL' where resource_flag='RESOURCE_STATE_DELETE';\""

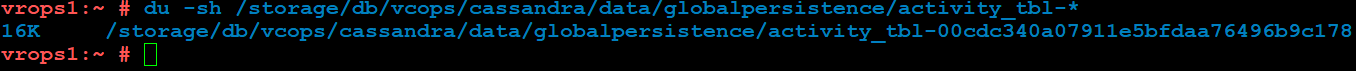

5. Perform Cassandra maintenance on the master node only. Afterword you will be running cassandra repair on the rest of the nodes that will sync up their databases with the master. There are a total of four commands here, so run them in order:

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/cqlsh --ssl --cqlshrc $VCOPS_BASE/user/conf/cassandra/cqlshrc -e "consistency quorum; truncate globalpersistence.activity_tbl"

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/cqlsh --ssl --cqlshrc $VCOPS_BASE/user/conf/cassandra/cqlshrc -e "consistency quorum; truncate globalpersistence.activityresults_tbl"

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/cqlsh --ssl --cqlshrc $VCOPS_BASE/user/conf/cassandra/cqlshrc -e "consistency quorum; truncate globalpersistence.queueid_tbl"

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool -p 9008 clearsnapshot

6. Perform Cassandra maintenance on all nodes:

- This is critical. The -pr option to the repair tool causes a subset of the token range to be coordinated from the node calling the nodetool. This improves performance however it is critical that ALL nodes in the Cassandra cluster perform this operation to get a complete and consistent repair. See <www.datastax.com_dev_blog_repair-cassandra >

- Execute this on the master and all data nodes simultaneously:

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool -p 9008 repair -par -pr

7. To monitor the repair progress, you can start another SSH session to the master node and tail the following log:

# tail -f /storage/log/vcops/log/cassandra/system.log

- The repair operation has two distinct phases. First it calculates the differences between the nodes (repair work to be done), and then it acts on those differences by streaming data to the appropriate nodes.

Generally speaking, you can also monitor the nodetool repair operation with these two nodetool commands, but this is not necessary:

- netstats: This monitors the repair streams to the nodes:

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool --port 9008 netstats

- compactionstats: This checks on the active Merkle Tree calculations:

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool --port 9008 compactionstats

8. Perform the instance metric key id clean-up on all nodes. Perform this step in parallel on the master and data nodes. This cleans up the disk on the nodes, as this cleans up the snapshots of Cassandra on each node:

# $VMWARE_PYTHON_BIN $VCOPS_BASE/tools/persistenceTool.py RemoveMatchedMetricKeys --outputFile /tmp/deleted_report.txt --regex "\"^diskspace-waste:.+?snapshot:.*\"" --remove true # $VMWARE_PYTHON_BIN $VCOPS_BASE/tools/persistenceTool.py RemoveMatchedMetricKeys --outputFile /tmp/deleted_report2.txt --regex "\"^diskspace:.+?snapshot:.*(accessTime|used)$\"" --remove true

9. Clean up the alarms & alerts on all nodes. Perform this step in parallel on the master and data nodes:

# su - postgres -c "/opt/vmware/vpostgres/9.3/bin/psql -p 5432 -U vcops -d vcopsdb -c 'truncate table alert cascade;'"

# su - postgres -c "/opt/vmware/vpostgres/9.3/bin/psql -p 5432 -U vcops -d vcopsdb -c 'truncate table alarm cascade;'"

10. Bring the analytics processes back online. Execute this step on the master, master replica and data nodes. You may use a ssh for-loop and execute these commands sequentially:

# service vmware-vcops start analytics

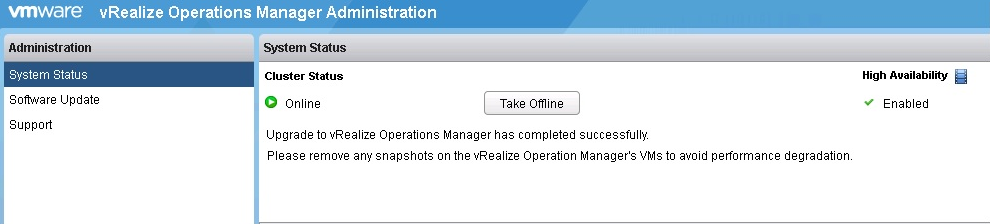

- I have seen the cluster to take 20 to 30 minutes to come back online (from my experience with a 9+ node large cluster).

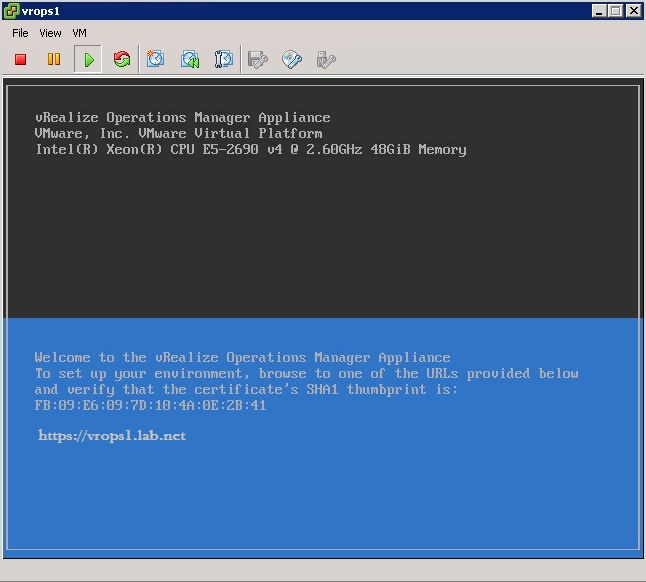

- If you log into the https://<vrops>/admin page, you will see that the HA status is degraded, or needs to be re-enabled. Give the appliance time as it will reset itself back to a healthy green state, once fully online.

11. Once the cluster is fully online and you can confirm the data is being collected, delete the snapshots you took earlier.

12. On the master node, if you again run the command:

# $VMWARE_PYTHON_BIN /usr/lib/vmware-vcops/tools/vrops-platform-cli/vrops-platform-cli.py getShardStateMappingInfo

You should see something similar to:

{

"stateMappings": {

"vrops1": {

"vRealize Ops Shard-0724c812-9def-4391-9efa-2395d701d43e": {

"state": "SYNCHING"

},

"vRealize Ops Shard-77839361-986c-4817-bbb3-e7f4f1827921": {

"state": "SYNCHING"

},

"vRealize Ops Shard-8469fdff-55f0-49f7-a0e7-18cd6cc288c0": {

"state": "RUNNING"

},

"vRealize Ops Shard-8c8d1ce4-36a5-4f23-b77d-29b839156383": {

"state": "SYNCHING"

},

"vRealize Ops Shard-ab79572e-6372-48d2-990d-d21c884b46fb": {

"state": "SYNCHING"

},

"vRealize Ops Shard-bfa03b9e-bac9-4040-b1a8-1fd8c2797a6a": {

"state": "SYNCHING"

}

}

},

13. On the master node, if you again run the nodetool status command

# $VCOPS_BASE/cassandra/apache-cassandra-2.1.8/bin/nodetool --port 9008 status

You should see something similar to:

Datacenter: datacenter1

=======================

Status=Up/Down

|/ State=Normal/Leaving/Joining/Moving

-- Address Load Tokens Owns Host ID Rack

UN 192.2.3.6 120.20 MB 256 ? 232f646b-3fbc-4388-8962-34000c1bb48b rack1

UN 192.2.3.7 128.20 MB 256 ? 1bfec59b-3ba8-4ca0-942f-5bb2f97b7319 rack1

UN 192.2.3.4 120.11 MB 256 ? da6c672c-cc69-4284-a8f5-2775441bb113 rack1

UN 192.2.3.5 115.33 MB 256 ? ee4a3c3f-3f0f-46ac-b331-a816e8eb37c5 rack1

DN 192.2.3.3 128.13 MB 256 ? 19e80237-6f2c-4ff6-881e-ce94870e0ca5 rack1

Note: Non-system keyspaces don't have the same replication settings,

effective ownership information is meaningless

- The ideal situation here is that now the Cassandra DB load should be around 1GB or less

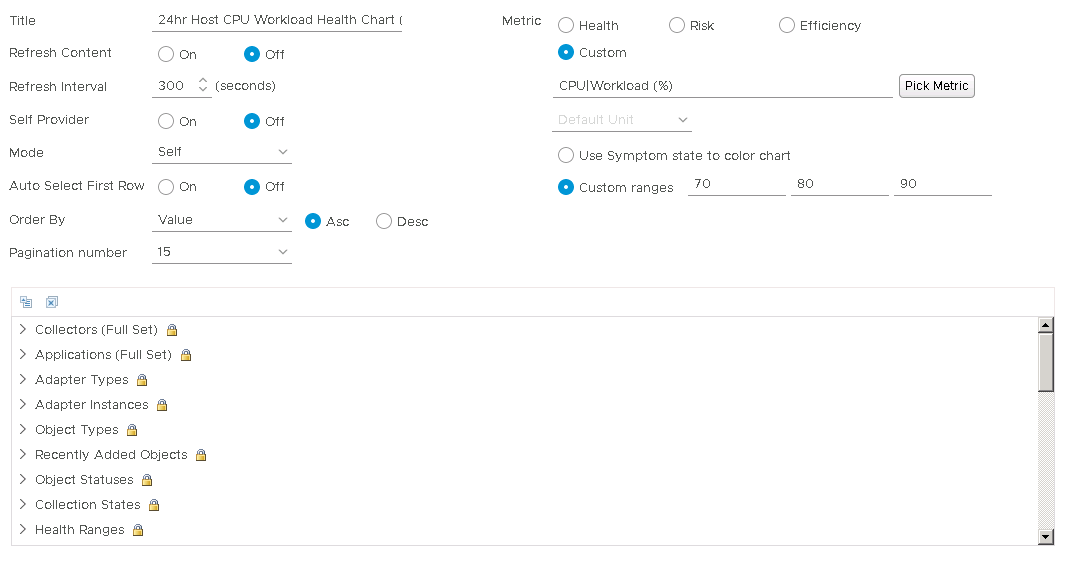

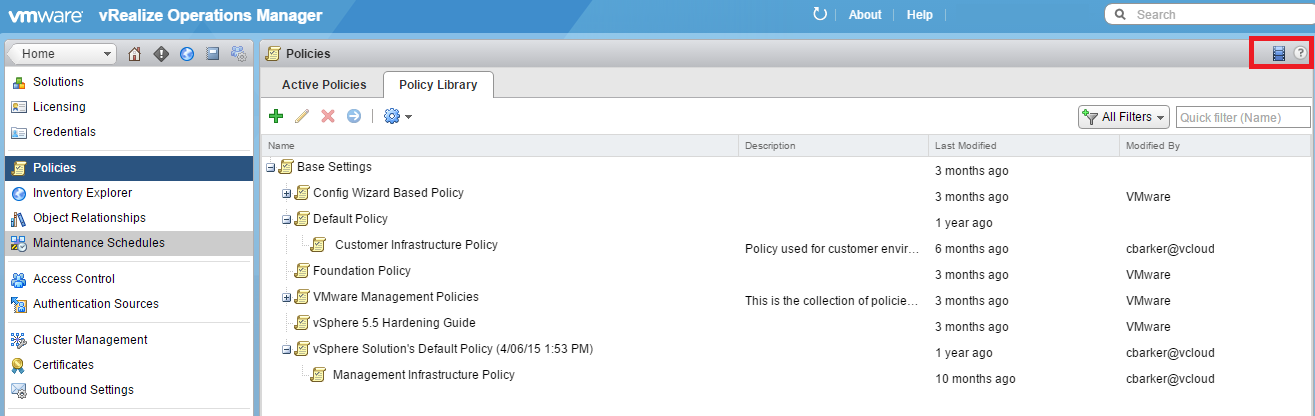

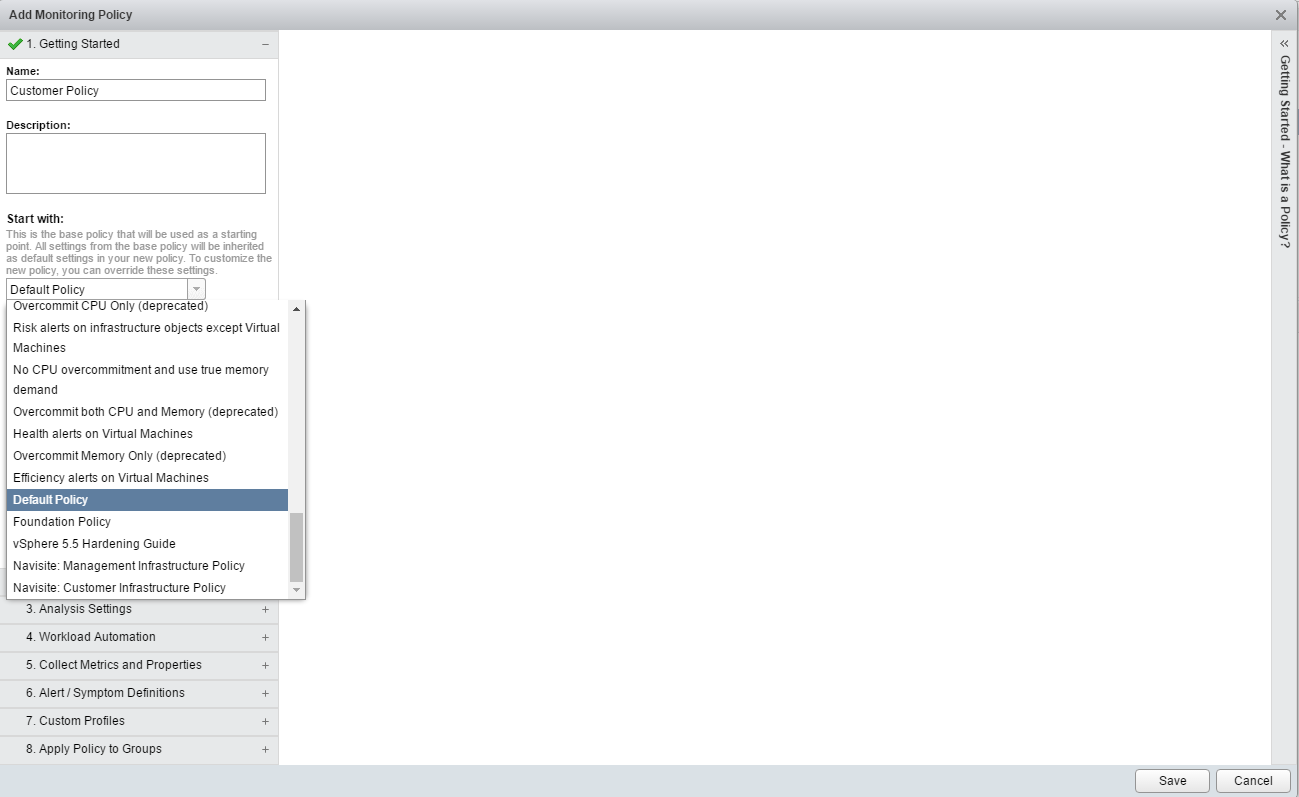

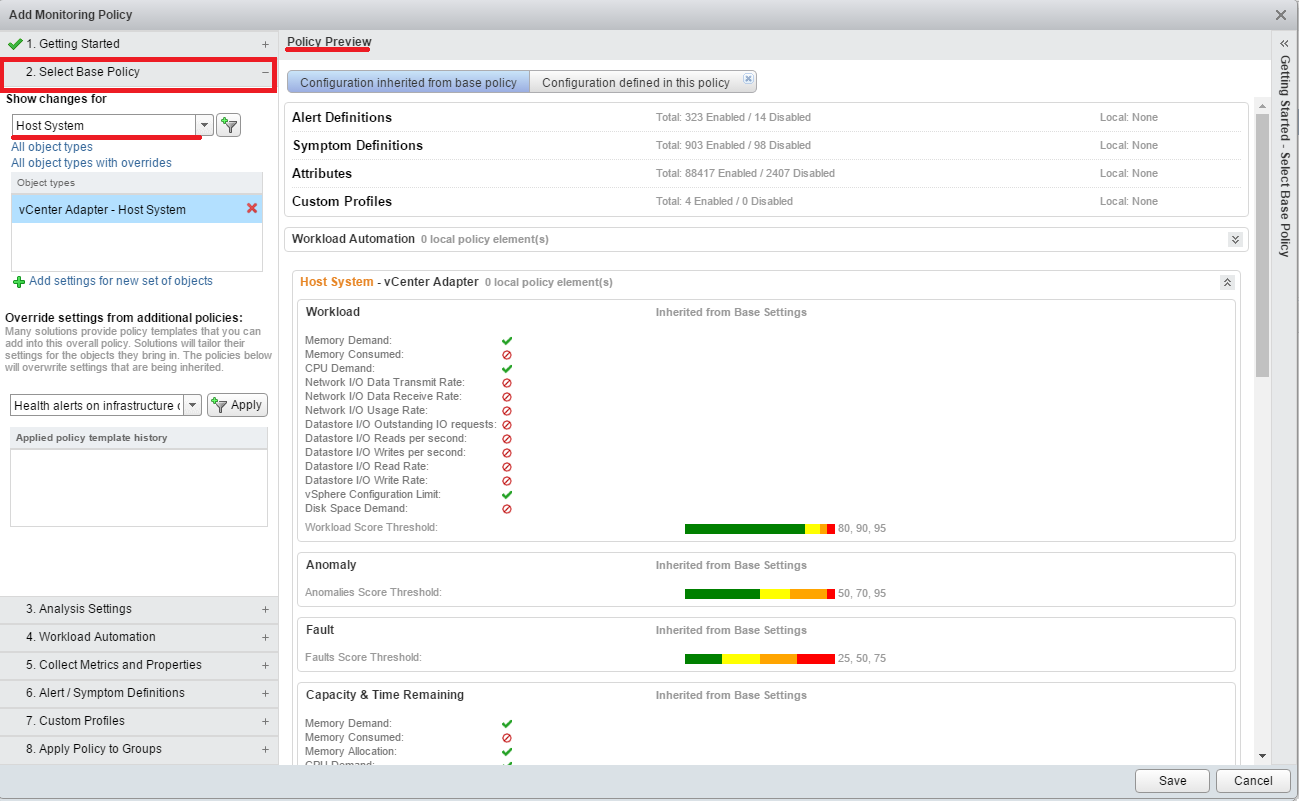

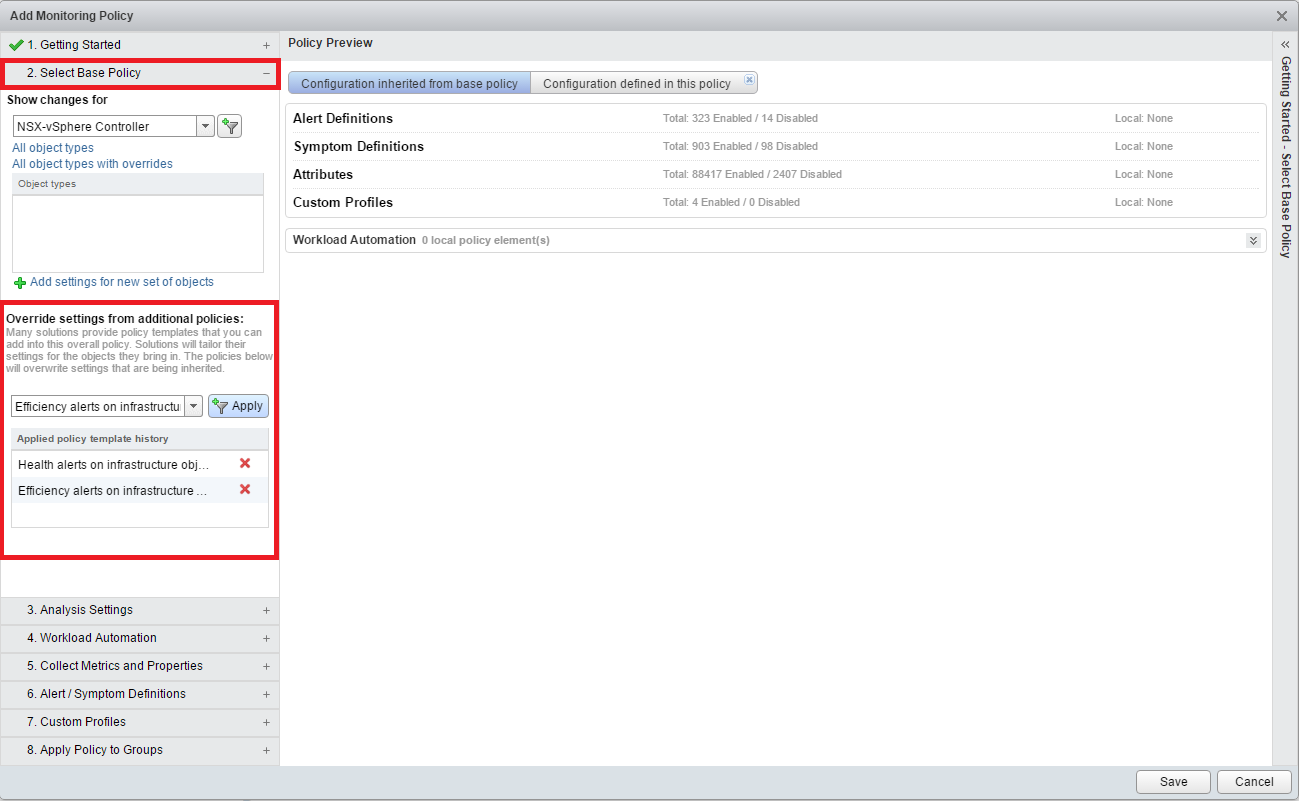

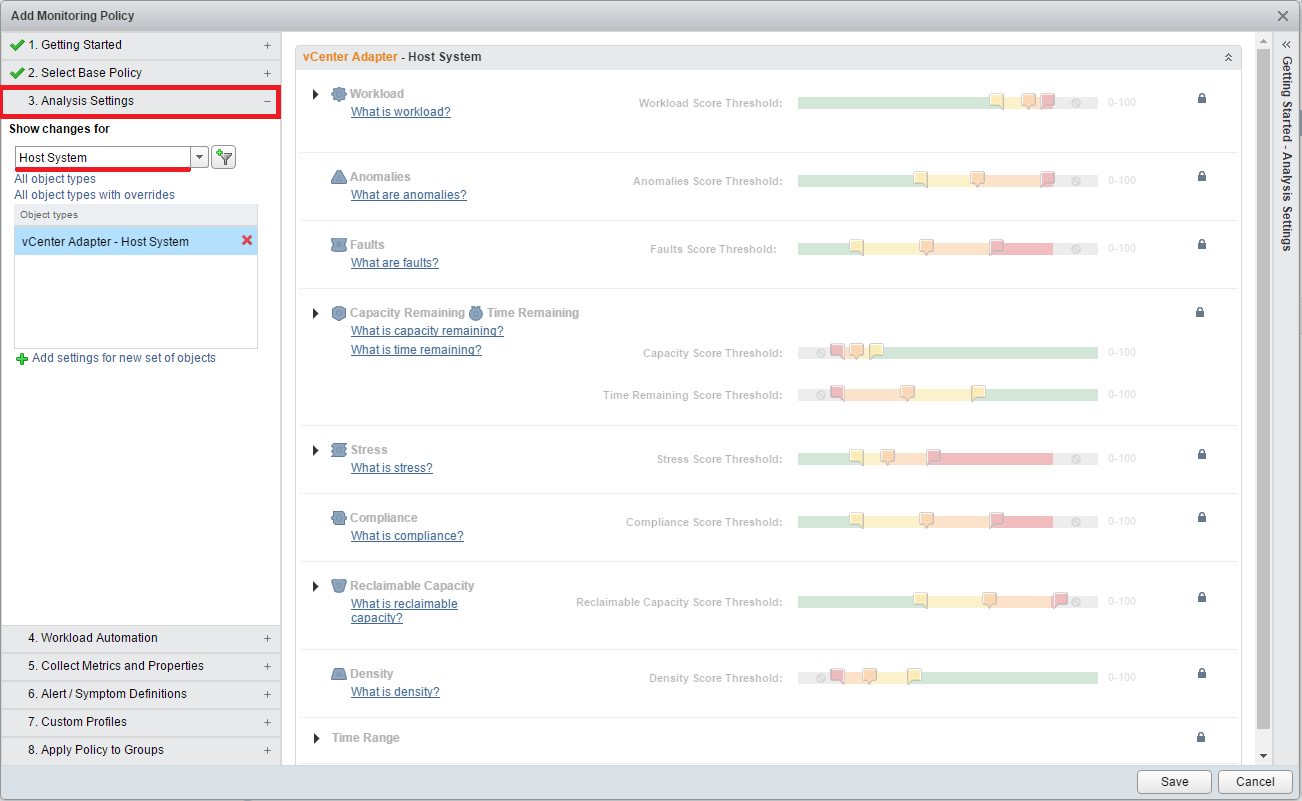

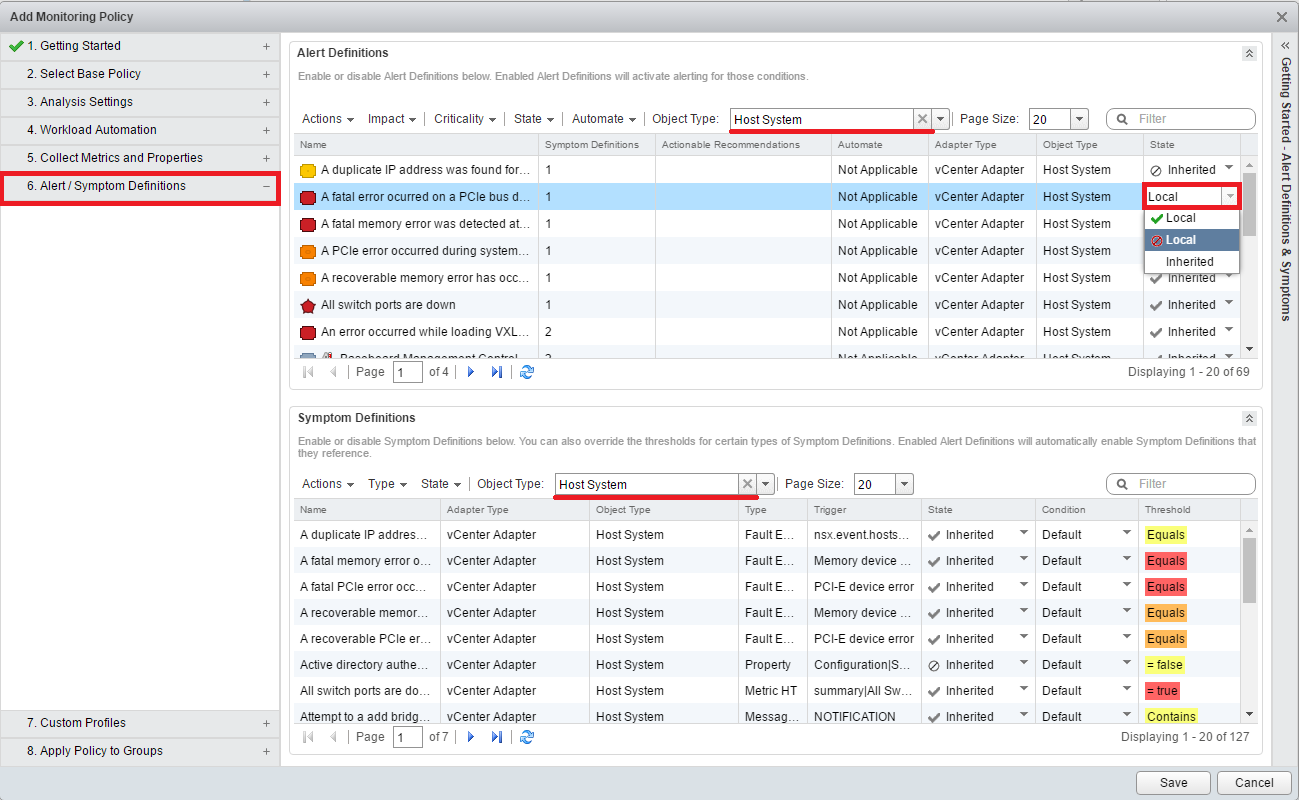

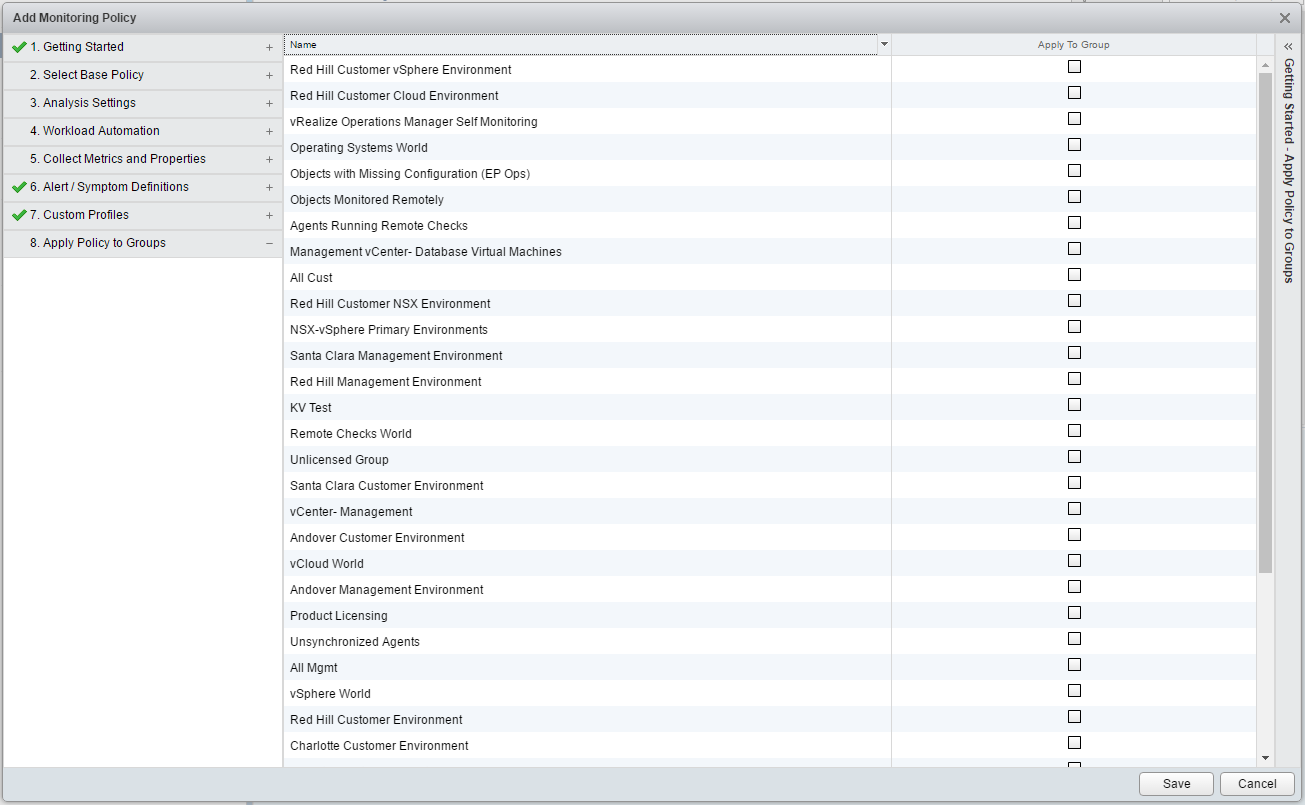

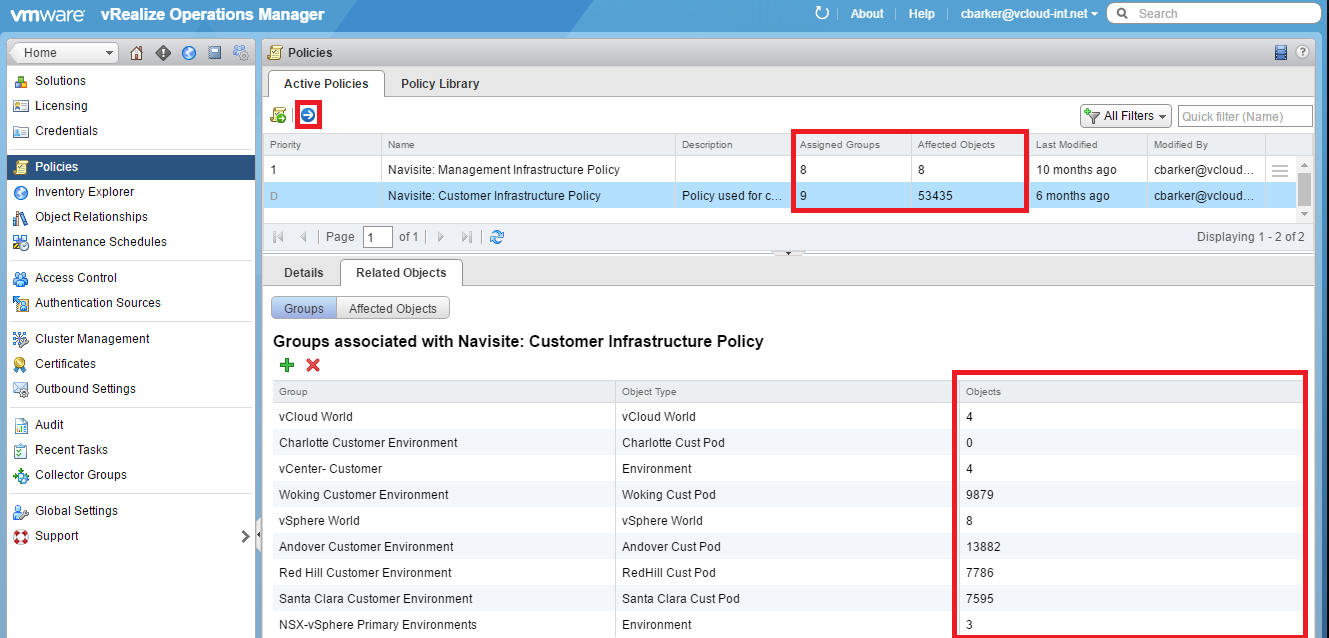

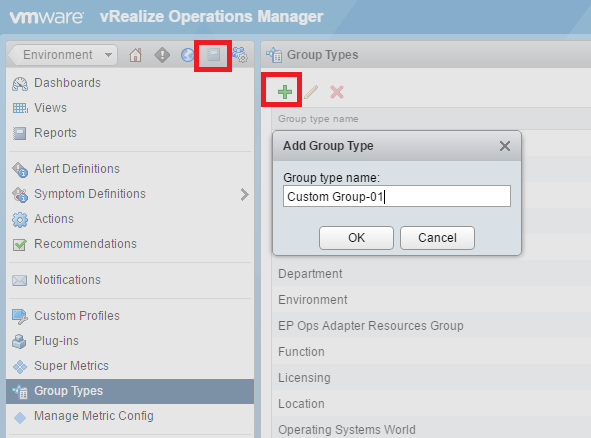

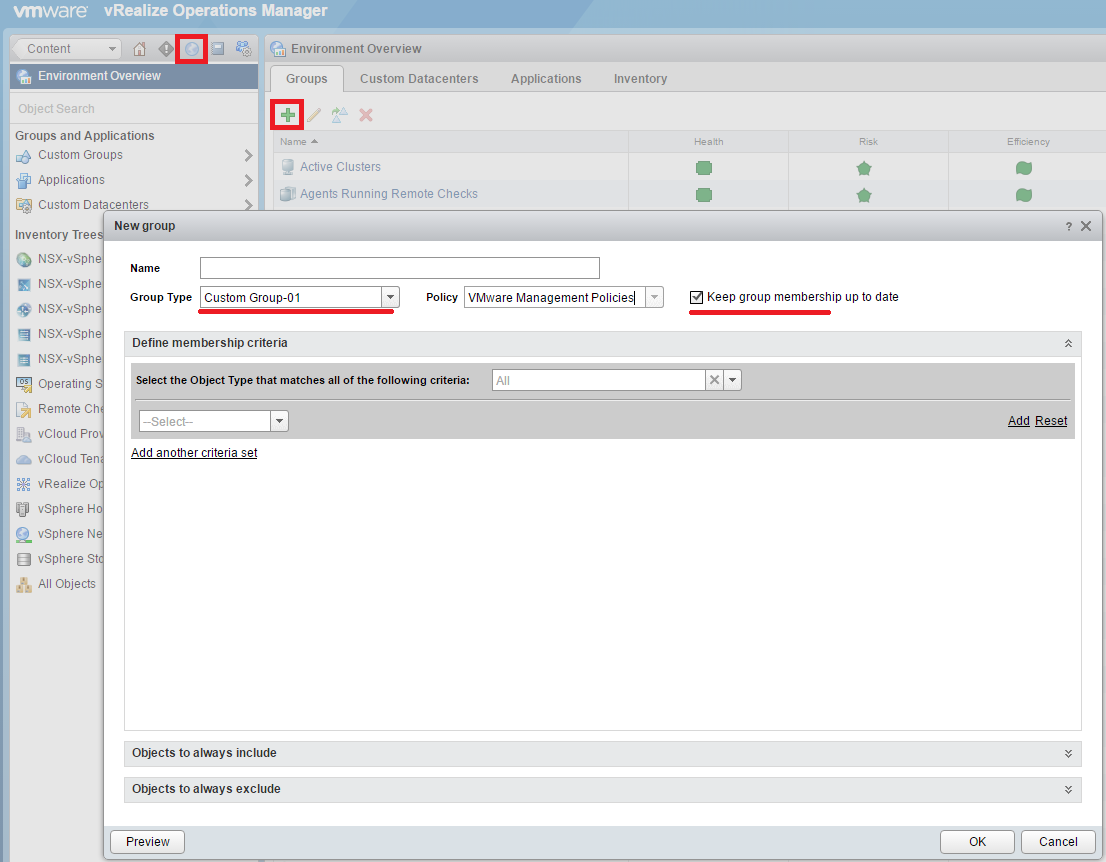

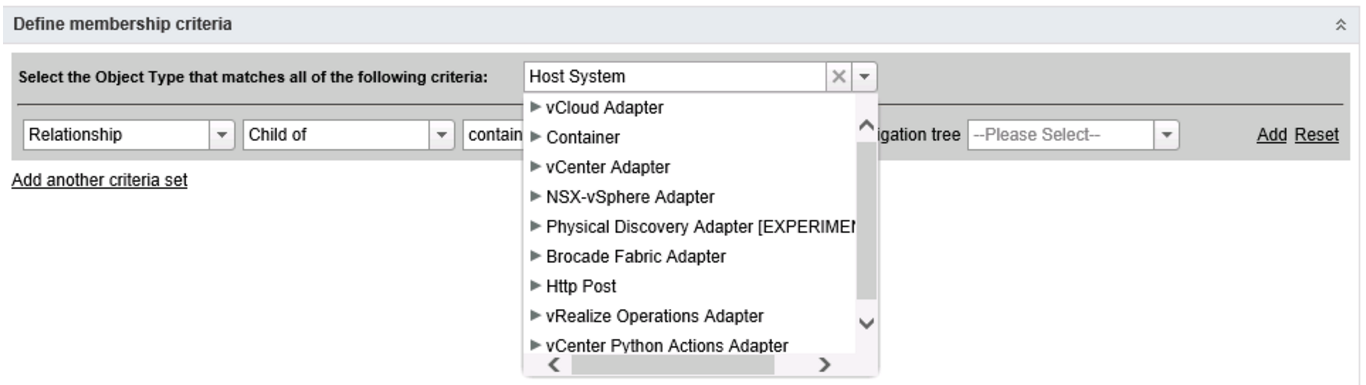

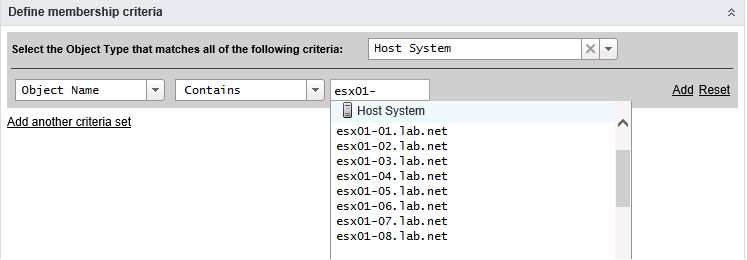

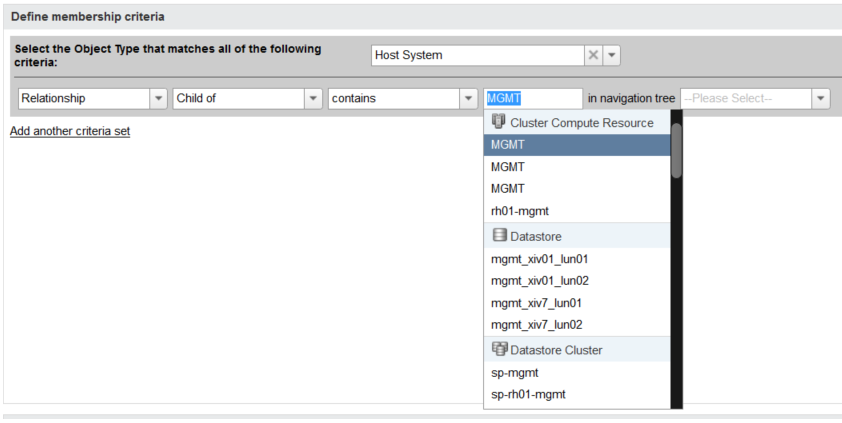

14. Log into the regular web interface and edit the policy to stop collections on snapshot metrics. This will help in overall performance going forward.

You must be logged in to post a comment.